Every once in a while, the TechEmpower Framework Benchmarks surface something that makes everyone involved squint at the numbers and say, “Wait… what?”

Round 23 had one of those.

On the face of it, nothing particularly dramatic had changed about the idea of the benchmarks. Same philosophy as always:

Choosing a web application framework involves evaluation of many factors. While comparatively easy to measure, performance is frequently given little consideration. We hope to help change that.

Application performance can be directly mapped to hosting dollars, and for companies both large and small, hosting costs can be a pain point. Weak performance can also cause premature and costly scale pain by requiring earlier optimization efforts and increased architectural complexity. Finally, slow applications yield poor user experience and may suffer penalties levied by search engines.

What if building an application on one framework meant that at the very best your hardware is suitable for one tenth as much load as it would be had you chosen a different framework?

That’s the north star for the project, and it hasn’t really changed.

What did change in R23 was that one set of tests, the spring-mongo implementations, looked much worse than anyone expected. Not “eh, that’s a little low” worse, but “this is probably pointing at a real problem” worse.

And because the internet is sometimes better than we deserve, the lead performance engineer at MongoDB noticed, reached out, and we ended up on a little adventure together.

MongoDB is represented in the benchmarks by spring-mongo (a Spring-based Java implementation) as well as a few Node.js variants. In Round 22, the spring-mongo tests were not the fastest things on the planet, but they were in a reasonable ballpark.

By Round 23, some of the spring-mongo results in our continuous benchmark environment (Citrine) had fallen off a cliff.

For one of the core tests (query) the numbers looked roughly like this (requests per second, spring-mongo only):

- Round 22: ~5.9k

- Round 23 baseline: ~583

That’s not noise. That’s a smoking gun.

At the same time, other frameworks and other MongoDB-based implementations weren’t seeing that same kind of collapse. That’s usually a hint that something environmental or configuration-related is going wrong, rather than “MongoDB suddenly became slow” or “Spring suddenly can’t talk to the database.”

This is where Ger Hartnett from MongoDB enters the story. Ger is the lead performance engineer at MongoDB, and he did exactly what I wish more vendors would do: he treated the weird benchmark as a bug report.

He emailed me and, very politely, started asking all the questions you’d expect a performance engineer to ask:

- “What’s the host hardware?” We’re running on a single Xeon 6330 box with 56 hyper-threaded cores.

- “What OS and kernel versions were used for R22 and R23?” Ubuntu 22.04 for R22 and 24.04 for R23; the host OS had also moved forward over time, and we hadn’t been explicitly encoding that in the results yet.

- “Can we get logs for `spring-mongo`?” We recommend against enabling logging for “round runs” (I/O eats CPU), but the benchmark harness and public status pages make it possible to turn logs on temporarily (via pull request) and then correlate them with specific continuous runs.

That kicked off a back-and-forth where we coordinated on where and how their team could safely tweak the spring-mongo implementations.

Meanwhile, MongoDB pulled in Intel to help analyze what was happening on their own lab hardware, including runs with and without “performance mode” enabled at the platform level.

So we now had three axes of investigation:

- The application and its configuration (the `spring-mongo` code and connection settings)

- The database process and allocator behavior (MongoDB itself under Docker)

- The platform-level configuration (power/performance modes, etc., analyzed with tools like PerfSpect)

The team ended up sending two pull requests:

- #10514 – a workaround for a MongoDB server issue involving TCMalloc per-CPU memory pools when running inside Docker containers.

- #10565 – changes to the way the `spring-mongo` client connects to the database, including adjustments to `maxPoolSize` and related options so the driver can actually make use of the available hardware.
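To make the second change concrete, here is a minimal sketch of how pool options end up in a MongoDB connection string. `maxPoolSize` and `minPoolSize` are standard connection string options; the helper name `build_mongo_uri`, the host, the database name, and the pool size of 256 are all hypothetical and are not the values used in PR #10565.

```python
from urllib.parse import urlencode


def build_mongo_uri(host, database, max_pool_size, min_pool_size=0):
    """Build a mongodb:// URI with explicit connection pool limits.

    Illustrative helper only: the right pool size depends on the
    workload and hardware, and should be found by measurement.
    """
    if max_pool_size < 1:
        raise ValueError("max_pool_size must be at least 1")
    if not 0 <= min_pool_size <= max_pool_size:
        raise ValueError("min_pool_size must be between 0 and max_pool_size")

    # Pool limits are passed as query-string options on the URI.
    options = {"maxPoolSize": max_pool_size, "minPoolSize": min_pool_size}
    return f"mongodb://{host}/{database}?{urlencode(options)}"


if __name__ == "__main__":
    # Hypothetical host, database, and pool size, for illustration only.
    print(build_mongo_uri("db.example.internal:27017", "benchmark", 256))
```

The point of the sketch is only that the pool ceiling is an explicit, tunable setting: if it is lower than the number of requests the application handles concurrently, requests wait for a free connection no matter how many cores the database host has.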

Once both PRs were in and our continuous benchmarking pipeline had cranked through them, Ger sent a summary email with the numbers for spring-mongo:

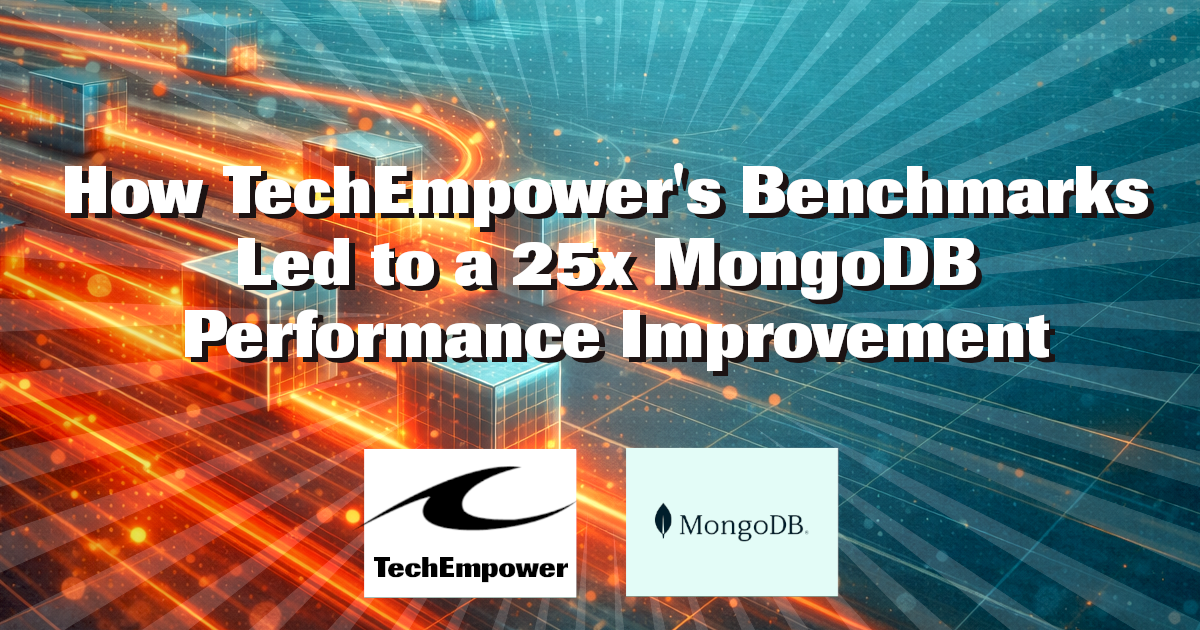

| Test | R22 | R23 Baseline | TCMalloc Fix Only | ConnString + TCMalloc |
|---|---|---|---|---|
| query | 5.9k | 583 | 837 | 14.2k |
| db | 104k | 68k | 192k | 205k |
| fortune | 22k | 59k | 167k | 188k |
| update | 2.4k | 528 | 795 | 10.2k |

The query test went from 583 to 14.2k in our environment. That’s roughly a 24x improvement relative to the Round 23 baseline, and about 2.4x the Round 22 result. The other tests also improved substantially, especially once the allocator and pool configuration were both in a sane place.
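For anyone who wants to check the ratios, here is a small sketch that recomputes them from the table. The figures are copied from Ger's summary and are already rounded, so the results are approximate; the dictionary keys and the `speedup` helper are names made up for this example.

```python
# Requests per second for spring-mongo, copied from the summary table.
RESULTS = {
    "query":   {"r22": 5_900,   "r23_baseline": 583,    "tcmalloc_only": 837,     "both_fixes": 14_200},
    "db":      {"r22": 104_000, "r23_baseline": 68_000, "tcmalloc_only": 192_000, "both_fixes": 205_000},
    "fortune": {"r22": 22_000,  "r23_baseline": 59_000, "tcmalloc_only": 167_000, "both_fixes": 188_000},
    "update":  {"r22": 2_400,   "r23_baseline": 528,    "tcmalloc_only": 795,     "both_fixes": 10_200},
}


def speedup(before, after):
    """Return how many times faster `after` is than `before`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return after / before


if __name__ == "__main__":
    for test, rps in RESULTS.items():
        vs_baseline = speedup(rps["r23_baseline"], rps["both_fixes"])
        vs_r22 = speedup(rps["r22"], rps["both_fixes"])
        print(f"{test:8s} {vs_baseline:5.1f}x vs R23 baseline, {vs_r22:4.1f}x vs R22")
```

Comparing against both columns matters here: the Round 23 baseline shows how much was recovered, while the Round 22 column shows that the fixed configuration is ahead of where the tests were before the regression.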

This was not just about our benchmark setup. MongoDB and Intel ran the same workload in their own environments, with and without CPU “performance mode,” and saw similar patterns: the combined fixes make a huge difference.

I like stories where everyone comes out looking better in the end. MongoDB has a much stronger showing in the benchmarks, the benchmark implementations themselves improve as reference examples, and if you’re out there picking a stack for your next project, you get numbers that are closer to what’s actually possible when people who care about performance have had a chance to sharpen the edges.