Their contribution allowed us to continue testing on physical hardware with 10-gigabit Ethernet. Ten-gigabit Ethernet gives the highest-performing frameworks an opportunity to shine. We were particularly impressed by Server Central’s customer service and technical support, which were responsive and helpful in troubleshooting configuration issues even though we were using their servers free of charge. (And since the advent of our Continuous Benchmarking, we were essentially using the servers at full load around the clock.)

Thank you, Server Central!

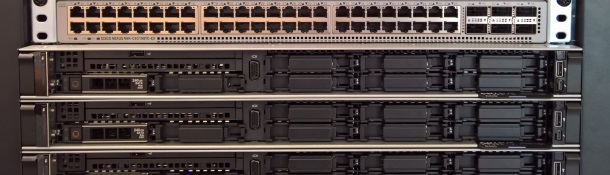

New hardware for Round 16 and beyond

For Round 16 and beyond, we are happy to announce that Microsoft has provided three Dell R440 servers and a Cisco 10-gigabit switch. These three servers are homogeneous, each configured with an Intel Xeon Gold 5120 CPU (14 cores/28 threads at 2.2 GHz base, 3.2 GHz turbo), 32 GB of memory, and an enterprise SSD.

If your contributed framework or platform performs best with hand-tuning based on cores, please send us a pull request to adjust the necessary parameters.
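As a rough illustration of what core-based tuning can look like, here is a minimal Python sketch that derives a worker count from the number of logical CPUs instead of hard-coding it. This is not taken from the TFB toolset: the function name `worker_count` and the `workers_per_cpu` ratio are hypothetical, and the right ratio for any given framework is something only its maintainers can determine by measurement.

```python
import os


def worker_count(logical_cpus=None, workers_per_cpu=1.0, minimum=1):
    """Return a worker count scaled to the number of logical CPUs.

    Hypothetical helper for illustration only. When logical_cpus is
    None, the count reported by the operating system is used.
    """
    if logical_cpus is None:
        # os.cpu_count() can return None on some platforms; fall back to 1.
        logical_cpus = os.cpu_count() or 1
    # Never return fewer than `minimum` workers.
    return max(minimum, int(logical_cpus * workers_per_cpu))


if __name__ == "__main__":
    # On a machine exposing 28 hardware threads, one worker per thread:
    print(worker_count(28))
    # One worker per physical core, assuming two threads per core:
    print(worker_count(28, workers_per_cpu=0.5))
```

Deriving the value at startup rather than fixing it in a configuration file means the same test implementation can adapt when the hardware environment changes, though a hand-picked constant may still perform better on a specific machine.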

Together, these servers compose a hardware environment we’ve named “Citrine,” and its results are visible on the TFB Results Dashboard. Initial results are impressive, to say the least.

Adopting Docker for Round 16

Concurrent with the change in hardware, we are hard at work converting all test implementations and the test suite to use Docker. There are several upsides to this change, the most important being better isolation. Our past home-brew mechanisms to clean up after each framework were, at times, akin to whack-a-mole as we encountered new and fascinating ways in which software may refuse to stop after being subjected to severe levels of load.

Docker will be used uniformly across all test implementations, so any impact will apply equally to all platforms and frameworks. Our measurements indicate a trivial performance impact versus bare metal: less than 1%.

As you might imagine, the level of effort to convert all test implementations to Docker is not small. We are making steady progress, but we would gladly accept contributions from the community. If you would like to participate in the effort, please see GitHub issue #3296.